Infini Core delivers a unified computing platform combining enterprise-grade GPU centers with high-performance bare-metal servers. With flexible GPU resource scheduling and low-latency hardware, we support AI development, scientific computing, and data-intensive workloads from training to inference. Build faster, scale easier, and innovate with reliable, high-efficiency infrastructure.


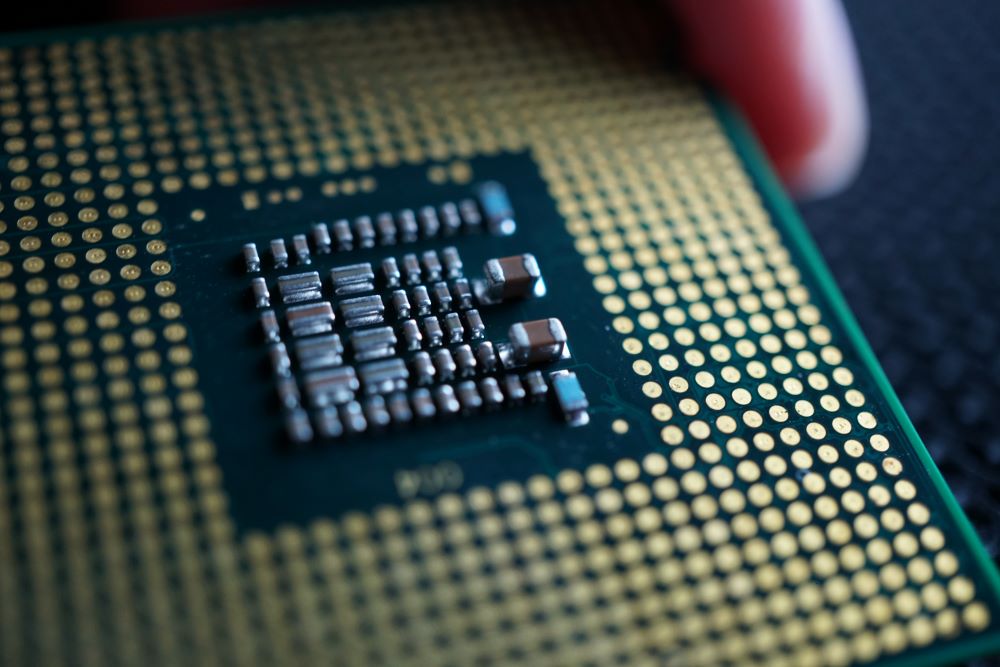

The GPU computing power pooling solution centralizes the management of multiple homogeneous or heterogeneous GPU servers, forming a unified GPU resource pool. Through an integrated resource management and scheduling system, GPU resources can be dynamically allocated and efficiently utilized.

Existing resources are reorganized and managed based on business service needs. Newly purchased hardware is planned according to configuration and scale, optimized for network and workload requirements, and grouped by chip type. To maximize resource efficiency, GPU resources are allocated according to parallel computing demands, including the coordinated scheduling of high-speed GPU interconnects and low-latency cluster networking.
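To make the idea of a chip-type-grouped pool concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the platform's actual scheduler: the names `GpuServer`, `GpuPool`, `allocate`, and `release`, the chip labels, and the "fill the emptiest servers first" placement rule are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class GpuServer:
    """One server in the pool (all fields are illustrative assumptions)."""
    name: str
    chip_type: str          # hypothetical label such as "chip-a"
    total_gpus: int
    free_gpus: int = field(init=False)

    def __post_init__(self) -> None:
        self.free_gpus = self.total_gpus


class GpuPool:
    """Groups servers by chip type and allocates GPUs from a single group."""

    def __init__(self, servers):
        self.groups = {}
        for server in servers:
            self.groups.setdefault(server.chip_type, []).append(server)

    def free(self, chip_type):
        """Total free GPUs of one chip type across the pool."""
        return sum(s.free_gpus for s in self.groups.get(chip_type, []))

    def allocate(self, chip_type, gpus):
        """Return {server name: GPU count}, or None if capacity is insufficient."""
        if gpus <= 0 or self.free(chip_type) < gpus:
            return None
        # Use the servers with the most free GPUs first, so a parallel job
        # spans as few nodes (and as little cluster networking) as possible.
        candidates = sorted(
            self.groups[chip_type], key=lambda s: s.free_gpus, reverse=True
        )
        placement = {}
        remaining = gpus
        for server in candidates:
            if remaining == 0:
                break
            take = min(server.free_gpus, remaining)
            if take:
                server.free_gpus -= take
                placement[server.name] = take
                remaining -= take
        return placement

    def release(self, placement):
        """Return previously allocated GPUs to the pool."""
        by_name = {s.name: s for group in self.groups.values() for s in group}
        for name, count in placement.items():
            by_name[name].free_gpus += count


pool = GpuPool([
    GpuServer("node-1", "chip-a", 8),
    GpuServer("node-2", "chip-a", 8),
    GpuServer("node-3", "chip-b", 4),
])
job = pool.allocate("chip-a", 12)   # a 12-GPU job must span two chip-a nodes
print(job, pool.free("chip-a"))
```

Keeping each request inside one chip-type group mirrors the grouping described above. A real scheduler would also weigh interconnect topology and network locality, which this sketch leaves out.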

A distributed architecture is used to aggregate and manage diverse computing resources. This enables seamless integration, unified scheduling, and optimization of heterogeneous computing power. The platform supports rapid expansion, reduction, and deployment of resources to meet varying user requirements and application scenarios.

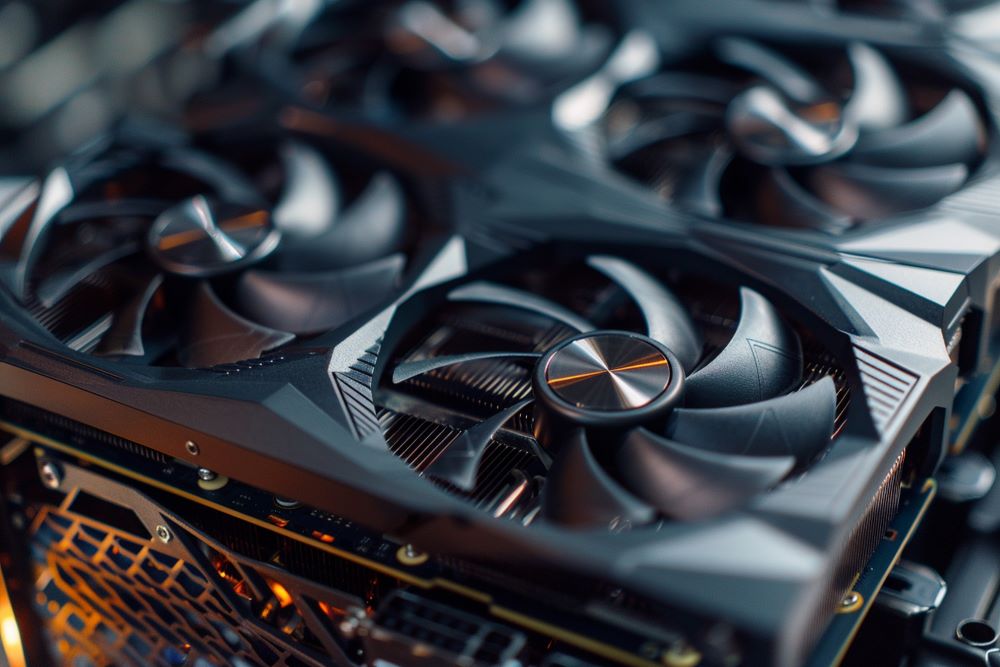

By leveraging multiple scheduling algorithms across different computing power types, the system delivers high-performance and highly reliable computing services. Distributed scheduling and multi-node orchestration ensure high availability and consistent performance across workloads, meeting the performance and stability requirements of a wide range of applications.

The platform supports flexible application, allocation, and use of different computing resource types. Users can deploy cloud hosts, AI computing power, or HPC computing power based on their needs, paying only for what they consume. This ensures scalable and adaptable computing capacity for tasks of any size or complexity.

A unified operations and maintenance platform significantly simplifies resource scheduling and system management. By reducing operational overhead, teams can focus more on business growth, product development, and innovation.

Streamlined Processes and Improved Efficiency:

The operation and maintenance workflow is significantly optimized, reducing manual intervention and configuration errors, which directly boosts overall work efficiency.

Intelligent O&M That Enhances Team Productivity:

With features like data analysis, predictive alerts, and automated fault recovery, the team can clearly understand system conditions, maintain stable operations, and address issues as soon as they arise.

Flexible Resource Allocation and Cost Optimization:

Using resource pools, vGPUs, and fine-grained permission controls, the system adapts quickly to changing business needs, accurately distributes resources, prevents waste, and significantly lowers O&M costs.
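As a rough illustration of how vGPU slicing and per-team permissions can work together, the Python sketch below grants GPU slices only when a team's quota allows it. Everything here is an assumption made for the example: the `VGpuDevice` class, the `grant` function, the four-slice split, the team names, and the best-fit placement rule are hypothetical and not taken from the platform.

```python
class VGpuDevice:
    """A physical GPU divided into equal vGPU slices (slice count is assumed)."""

    def __init__(self, name, slices=4):
        self.name = name
        self.slices = slices
        self.owners = {}  # team name -> number of slices held on this device

    def free(self):
        return self.slices - sum(self.owners.values())


def grant(devices, quotas, team, slices):
    """Grant `slices` on one device if the team's quota allows it, else None."""
    held = sum(d.owners.get(team, 0) for d in devices)
    if slices <= 0 or held + slices > quotas.get(team, 0):
        return None  # quota (permission) check failed
    fitting = [d for d in devices if d.free() >= slices]
    if not fitting:
        return None  # no single device has enough free slices
    # Best fit: pick the fullest device that still fits, which keeps
    # whole GPUs free for larger requests and limits fragmentation.
    device = min(fitting, key=lambda d: d.free())
    device.owners[team] = device.owners.get(team, 0) + slices
    return device.name


devices = [VGpuDevice("gpu-0"), VGpuDevice("gpu-1")]
quotas = {"research": 3, "analytics": 2}  # hypothetical per-team slice limits

print(grant(devices, quotas, "research", 2))   # placed on gpu-0
print(grant(devices, quotas, "analytics", 2))  # packed onto gpu-0 as well
print(grant(devices, quotas, "research", 2))   # None: would exceed the quota of 3
```

Packing small requests onto already-used devices is one simple way to avoid waste. Production systems typically add isolation, preemption, and accounting on top of a rule like this.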

Flexible Computing Environment for Innovation:

The platform provides a convenient and scalable computing environment that removes tedious application procedures and resource constraints, allowing engineers to focus fully on algorithm optimization and innovation.

Accelerated Iteration and Faster Project Delivery:

End-to-end process optimization helps algorithm engineers move projects forward efficiently, from model training through deployment, making each step smoother and shortening product launch cycles.

Stable, Reliable Support for Safe Innovation:

Multi-node collaboration and advanced scheduling algorithms ensure stable system performance even under high loads, providing a dependable development platform for continuous and secure innovation.

These versatile servers can be configured to meet a wide range of application needs. You can select single or dual processors, choose your preferred RAM capacity, RAID configuration, and storage type. GPU options are also available to further enhance performance.

Built for high availability and fault tolerance, these clusters use a proprietary resilient architecture that supports high-throughput, low-latency data exchange between servers within a secure VLAN environment.

With high-speed connectivity through our private network, you can securely link your clusters with solutions hosted across our global network of data centres, ensuring smooth and secure system integration.

Built on a high-bandwidth, low-latency private network, the infrastructure enables fast and reliable communication between servers within secure VLAN environments. It is designed to support modern AI workloads, distributed training, data lakes, and seamless integration with cloud-based services.

Powered by high-performance NVMe storage architectures, the platform delivers ultra-low latency and high throughput to accelerate databases, real-time analytics, search engines, data processing pipelines, and other data-intensive applications.

The platform supports modern AI and machine learning frameworks such as PyTorch, TensorFlow, and JAX, along with widely adopted ecosystem tools including ONNX Runtime. Optimized libraries like cuDNN, NCCL, and TensorRT ensure efficient training and inference across diverse AI workloads.

All major storage types—including SAS, SATA, and NVMe—support hot-swapping, allowing storage expansion or drive replacement without system downtime. This ensures continuous availability and operational stability for mission-critical workloads.

Dedicated customer service and technical specialists are ready to assist you throughout your projects.

A team of experts is available to guide you through planning, deployment, and optimisation of your solutions.

A global network of trusted partners provides additional expertise and resources to support all your project needs.

We deliver reliable, high-performance infrastructure solutions designed for modern enterprises, empowering businesses with secure, scalable, and future-ready technology built to meet the demands of today and tomorrow.

Copyright © 2025 INFINI CORE PTE. LTD. All rights reserved.